
IEEE International Conference on Robotics and Automation (ICRA 2024)

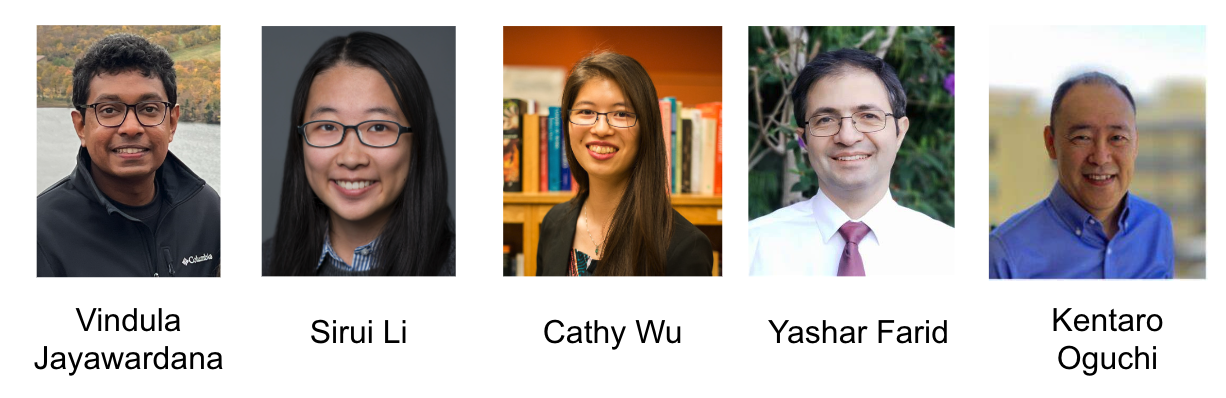

- Vindula Jayawardana\(^{1,2}\)

- Sirui Li\(^1\)

- Cathy Wu\(^1\)

- Yashar Farid\(^2\)

- Kentaro Oguchi\(^2\)

- \(^1\) MIT

- \(^2\) Toyota Motor North America

Conventional control, such as model-based control, is commonly utilized in autonomous driving due to its efficiency and reliability. However, real-world autonomous driving contends with a multitude of diverse traffic scenarios that are challenging for these planning algorithms. Model-free Deep Reinforcement Learning (DRL) presents a promising avenue in this direction, but learning DRL control policies that generalize to multiple traffic scenarios is still a challenge. To address this, we introduce Multi-residual Task Learning (MRTL), a generic learning framework based on multi-task learning that, for a set of task scenarios, decomposes the control into nominal components that are effectively solved by conventional control methods and residual terms which are solved using learning. We employ MRTL for fleet-level emission reduction in mixed traffic using autonomous vehicles as a means of system control. By analyzing the performance of MRTL across nearly 600 signalized intersections and 1200 traffic scenarios, we demonstrate that it emerges as a promising approach to synergize the strengths of DRL and conventional methods in generalizable control.

We address the challenge of algorithmic generalization\(^1\) in Deep Reinforcement Learning (DRL). Formally, we aim to solve Contextual Markov Decision Processes (CMDPs)\(^2\). We introduce Multi-residual Task Learning (MRTL), a generic framework that combines DRL and conventional control methods. MRTL divides each scenario into a part solvable by conventional control and a residual solvable by DRL. The final control input for a scenario is the superposition of these two control inputs. We learn multiple residual terms across scenarios by conditioning the residual function on each scenario.

Formally, MRTL augments a given nominal policy \(\pi_n(s, c): \mathcal{S} \times \mathcal{C} \rightarrow \mathcal{A}\), which maps a state \(s \in \mathcal{S}\) and a context \(c \in \mathcal{C}\) to an action in \(\mathcal{A}\), by learning residuals on top of it. In particular, we learn a residual function \(f_\theta(s, c): \mathcal{S} \times \mathcal{C} \rightarrow \mathcal{A}\) with parameters \(\theta\), so that the MRTL policy \(\pi(s, c): \mathcal{S} \times \mathcal{C} \rightarrow \mathcal{A}\) is given by

\(\pi(s, c)=\pi_n(s, c)+f_\theta(s, c)\)

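Because \(\pi_n\) does not depend on the learned parameters \(\theta\), the gradient of the policy reduces to that of the residual: \(\nabla_\theta \pi(s, c) = \nabla_\theta f_\theta(s, c)\). The gradient of \(\pi\) therefore does not depend on \(\pi_n\), which leaves the choice of nominal policy flexible.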
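The superposition \(\pi(s, c)=\pi_n(s, c)+f_\theta(s, c)\) can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration and is not taken from the paper: the two nominal controllers (`nominal_cruise`, `nominal_zero`), the linear residual, the state layout `[speed, gap]`, and the context layout `[target_speed, grade]` are all hypothetical. The finite-difference helper numerically checks that the gradient with respect to \(\theta\) is the same whichever nominal policy is plugged in.

```python
import numpy as np

def nominal_cruise(s, c):
    # Hypothetical nominal controller: proportional tracking of a
    # context-dependent target speed. s = [speed, gap], c = [target_speed, grade].
    return np.array([0.5 * (c[0] - s[0])])

def nominal_zero(s, c):
    # Hypothetical alternative nominal controller that always outputs zero.
    return np.zeros(1)

def residual(theta, s, c):
    # Illustrative residual f_theta(s, c): linear in the concatenated
    # state and context. A real residual would typically be a neural network.
    x = np.concatenate([s, c])
    return theta @ x

def mrtl_policy(theta, s, c, nominal):
    # pi(s, c) = pi_n(s, c) + f_theta(s, c)
    return nominal(s, c) + residual(theta, s, c)

def grad_theta(theta, s, c, nominal, eps=1e-6):
    # Central finite-difference gradient of the (scalar) action w.r.t. theta.
    grad = np.zeros_like(theta)
    for idx in np.ndindex(theta.shape):
        delta = np.zeros_like(theta)
        delta[idx] = eps
        plus = mrtl_policy(theta + delta, s, c, nominal)[0]
        minus = mrtl_policy(theta - delta, s, c, nominal)[0]
        grad[idx] = (plus - minus) / (2 * eps)
    return grad

state = np.array([10.0, 20.0])      # assumed layout: speed, gap
context = np.array([12.0, 0.3])     # assumed layout: target speed, grade
theta = np.array([[0.01, -0.02, 0.03, 0.5]])

action = mrtl_policy(theta, state, context, nominal_cruise)
g_cruise = grad_theta(theta, state, context, nominal_cruise)
g_zero = grad_theta(theta, state, context, nominal_zero)
same_gradient = np.allclose(g_cruise, g_zero, atol=1e-5)
```

With `theta` set to all zeros the policy returns exactly the nominal action, so in this sketch learning starts from the conventional controller's behavior. Swapping `nominal_cruise` for `nominal_zero` changes the action but leaves the gradient with respect to `theta` unchanged.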

@inproceedings{jayawardana@mrtl,

title={Generalizing Cooperative Eco-driving via Multi-residual Task Learning},

author={Jayawardana, Vindula and Li, Sirui and Wu, Cathy and Farid, Yashar and Oguchi, Kentaro},

booktitle={International Conference on Robotics and Automation (ICRA)},

year={2024}

}
